Radiance Caching for efficient Global Illumination Computation

Abstract

Radiance Caching is a ray tracing-based method for accelerated global illumination computation in scenes with low-frequency glossy BRDFs. The method is based on sparse sampling, caching, and interpolating radiance on glossy surfaces. In particular we extend the irradiance caching scheme proposed by Ward et al. in 1988 to cache and interpolate directional incoming radiance instead of irradiance. The incoming radiance at a point is represented by a vector of coefficients with respect to a spherical or hemispherical basis.

The work has been done in collaboration with IRISA / INRIA Rennes, France and the University of Central Florida, USA (see the radiance caching page at UCF).

|

|

|

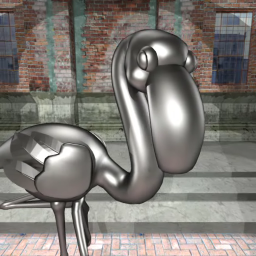

Fig. 1: Importance of indirect illumination for proper material perception. With indirect illumination (left), the flamingo looks as made of metal (which it indeed is), whereas with direct illumination only (right) it looks as though made of a dark plastic. |

Radiance Caching

The basic idea of radiance caching is to compute indirect illumination only at certain locations and then interpolate it elsewhere, as can be seen in Figure 1. Indirect illumination at a point is computed by hemisphere sampling, using 500-5000 rays, and stored in the radiance cache represented by spherical harmonics. Later, the stored values can be reused through interpolation.

|

|

|

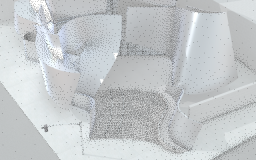

Fig. 2: In radiance caching, indirect illumination is interpolated from a sparse set of illumination values stored in the radiance cache. Left: Positions of cached illumination values. Right: Resulting interpolated illumination. (Click to enlarge.) |

Radiance Gradient Computation

To improve interpolation quality, radiance gradients are computed during hemisphere sampling and stored in the cache. In [1] and [3], we propose two novel methods for computing the gradient, each having different pros and cons.

|

|

|

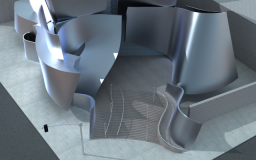

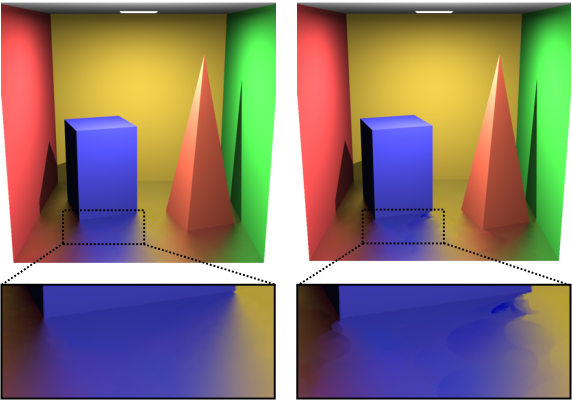

Fig. 3: Radiance gradient significantly improves the smoothness of the interpolated illumination on the glossy floor. Left: With gradient. Right: No gradient. |

Coordinate Frame Alignment - Fast Spherical Harmonic Rotation

When interpolating illumination on curved surfaces, care must be taken to properly align the (different) local coordinate frames, with respect to which the cached illumination is represented. This is achieved through spherical harmonics rotation. In [4], we propose a fast approximate procedure for spherical harmonics rotation.

Quality and Robustness Improvements - Adaptive Caching and Neighbor Clamping

To improve reliability if both irradiance and radiance caching, we propose in [5] the neighbor clamping heuristic, which equalizes the spatial distribution of cached illumination values thereby improving the image quality. Adaptive Caching, is designed to adapt the distribution of cached samples on glossy surfaces to the actual illumination conditions in the scene, with the purpose of avoiding interpolation artifacts.

|

Fig. 4: Adaptive caching (left) suppresses the interpolation artifact that may occur with the original radiance caching algorithm (right). |

GPU Implementation - Radiance Cache Splatting

We reformulated radiance and irradiance caching to make it more suitable for GPU implementation. Hemisphere sampling at a point is performed by the GPU using hemicube rasterization. The computed radiance is then directly splatted on the screen. This avoids searching for records in the octree data structure, as in the original radiance caching. The implementation delivers is high quality, first bounce global illumination at interactive frame rates.

Video

Radiance Caching:

|

Final radiance caching video (35MB)

Compares radiance caching to Monte Carlo sampling. Monte Carlo results suffer from high frequency noise. |

|

Flamingo A side-by side comparison of radiance caching (left) and Monte Carlo sampling (right). |

|

Walt Disney Concert Hall - high quality

rendering with radiance caching Designed by Frang Gehry, Walt Disney Concert Hall is located in Los Angels, CA. See this picture. |

Radiance Cache Splatting:

Source Code

Code is in C++. You are free to use it for commercial and non-commercial purposes. If you do use it, please, acknowledge this web page. Mind this is a reserch code - much of it is useless for practical purposes.

libsphar.zip

A spherical and hemispherical harmonics library.

Supports storage of coefficents vectors, evaluation of basis functions, various implementations of spherical harmonics rotation (see rotation-howto.txt). Operation of coefficients vectors implemented using SIMD.

rcache.zip

Radiance caching related sources from the GOLEM renderer.

You cannot compile these files as they are, since you would need the rest of GOLEM, which unfortunately is not open source. However, it should be pretty straightforward to port this to your renderer. Start reverse engineering from rt17app and rt19app, which are the actual rendering applications based on irradiance and radiance caching, respectively.

Presentations

| [1] |

2004: Jaroslav Křivánek, Pascal

Gautron, Sumanta Pattanaik,

Kadi Bouatouch Radiance Caching for Efficient Global Illumination Computation in 3rd International Radiance Workshop (2004), Fribourg, Switzerland, October 11-12, 2004. |

| [2] |

2004: Jaroslav Křivánek Spherical Harmonics Lighting: Fundamental in Graphics Group Seminar, UCF, Orlando, October 22, 2004. |

Ph.D. Thesis

| [1] |

2005: Jaroslav Křivánek Radiance Caching for Global Illumination Computation on Glossy Surfaces PhD Thesis, Université de Rennes 1 and Czech Technical University in Prague, December 2005. Links: Thesis page / Thesis (PDF) / Defence Talk Slides (PPT) / BibTeX entry (BIB) / References (BIB) |

Publications

| [1] |

2005: Jaroslav Křivánek, Pascal

Gautron , Sumanta Pattanaik,

and Kadi Bouatouch Radiance Caching for Efficient Global Illumination Computation IEEE Transactions on Visualization and Computer Graphics , Vol. 11, No. 5, September/October 2005 Links: Article (PDF) / BibTeX entry (BIB) |

| [2] |

2005: Pascal Gautron ,

Jaroslav Křivánek,

Kadi Bouatouch, and

Sumanta Pattanaik Radiance Cache Splatting: A GPU-Friendly Global Illumination Algorithm In Rendering Techniques, Proceedings of Eurographics Symposium on Rendering, p. 55-64. June 2005. Links: Paper (PDF) / BibTeX entry (BIB) / References (BIB) / Presentation (PPT) |

| [3] |

2005: Jaroslav Křivánek,

Pascal Gautron ,

Kadi Bouatouch, and

Sumanta Pattanaik Improved Radiance Gradient Computation (Paper in Conference Proceedings) In Proceedings of Spring Conference on Computer Graphics SCCG 2005, ACM Press, 2005, p.155-159. Links: ACM Digital Library / Paper (PDF) / BibTeX entry (BIB) / References (BIB) / Presentation (PPT) |

| [4] |

2006: Jaroslav Křivánek, Jaakko

Konttinen, Sumanta Pattanaik,

Kadi Bouatouch, and

Jiří Žára Fast Approximation to Spherical Harmonic Rotation SCCG '06: Proceedings of the 22nd Spring conference on Computer graphics. Links: Paper (PDF) / BibTeX entry (BIB) / References (BIB) / Presentation (PPT) (Also a SIGGRAPG 2006 Sketch) |

| [5] |

2006: Jaroslav Křivánek,

Kadi Bouatouch,

Sumanta Pattanaik, and

Jiří Žára Making Radiance and Irradiance Caching Practical: Adaptive Caching and Neighbor Clamping In Rendering Techniques, Proceedings Eurographics Symposium on Rendering, June 2006. Links: Paper (PDF) / BibTeX entry (BIB) / References (BIB) / Presentation (ZIP) |

Acknowledgement

This work was partially supported by

-

ATI Research, I-4 Matching fund and Office of Naval Research,

-

France Telecom R&D, Rennes, France,

-

Ministry of Education of the Czech Republic under the research programs LC-06008 (Center for Computer Graphics) and MSM 6840770014.