Hello,

I am a Ph.D. student at the Computer Graphics Group, supervised by Alexander Wilkie and formerly by Jaroslav Křivánek.

In general, I focus on visual computing, a field of computer science encompassing real-time and offline computer graphics, image processing, appearance fabrication, 3D printing, and more.

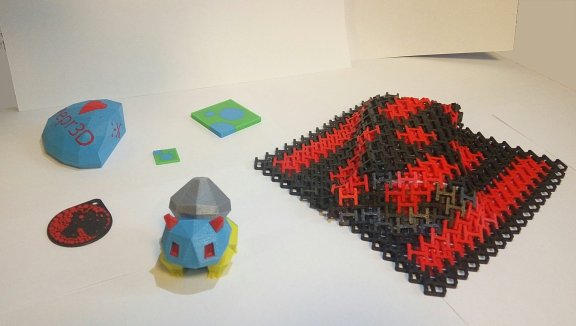

In particular, my current Ph.D. research aims to enable highly accurate full-color 3D printing, which is part of the predictive rendering pipeline.

This is critical for anyone who relies on 3D appearance manufacturing and visual prototyping, such as designers, movie studios, architects, or personalized print services.

The full list of my publications follows. You can also visit my Google Scholar profile.

December 2022 • ACM Transactions on Graphics (TOG)

Journal Publication

ACM SIGGRAPH Asia 2022

We present a spectral measurement approach for the bulk optical properties of translucent materials using only low-cost components. We focus on the translucent inks used in full-color 3D printing and develop a technique with high spectral resolution, which is important for accurate color reproduction. To enable this, we develop a new acquisition technique for the three unknown material parameters, namely the absorption coefficient, the scattering coefficient, and the phase function anisotropy factor, that requires only three point measurements with a spectrometer. In essence, our technique relies on a three-dimensional appearance map, computed using Monte Carlo rendering, that converts between the three observables and the material parameters. Our measurement setup works without laboratory equipment or expensive optical components. We validate our results on a 3D-printed color checker with various ink combinations. Our work paves the way for more accurate appearance modeling and fabrication, even in low-budget environments or when affordably embedded into other devices.
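The inversion idea, tabulating three observables over a 3D grid of material parameters and then searching that table for a measurement, can be illustrated with a small lookup-table sketch. It rests on loud assumptions: `toy_forward` is a hypothetical analytic stand-in for the Monte Carlo simulation, the grid ranges are arbitrary, and the inversion snaps to the nearest grid node instead of interpolating. It is not the actual measurement method.

```python
import itertools
import math

def toy_forward(sigma_a, sigma_s, g):
    """HYPOTHETICAL stand-in for a Monte Carlo simulation: maps
    (absorption, scattering, anisotropy) to three observables."""
    albedo = sigma_s / (sigma_a + sigma_s)
    extinction = sigma_a + sigma_s
    return (
        albedo * (1.0 - 0.5 * g),   # reflectance-like reading (toy)
        math.exp(-extinction),      # transmittance-like reading (toy)
        albedo * (0.5 + 0.5 * g),   # forward-scatter-like reading (toy)
    )

def build_map(sa_values, ss_values, g_values):
    """Tabulate the observables for every parameter triple on a 3D grid."""
    return [((sa, ss, g), toy_forward(sa, ss, g))
            for sa, ss, g in itertools.product(sa_values, ss_values, g_values)]

def invert(table, observed):
    """Return the parameter triple whose tabulated observables are closest
    (squared Euclidean distance) to the measured observables."""
    def dist(entry):
        return sum((a - b) ** 2 for a, b in zip(entry[1], observed))
    return min(table, key=dist)[0]

# Arbitrary, hypothetical grid ranges.
sa_grid = [0.1 * i for i in range(1, 11)]
ss_grid = [0.5 * i for i in range(1, 11)]
g_grid = [-0.5 + 0.1 * i for i in range(11)]
appearance_map = build_map(sa_grid, ss_grid, g_grid)

measured = toy_forward(0.3, 2.0, 0.2)   # pretend this came from the spectrometer
print(invert(appearance_map, measured))
```

A dense table plus a search keeps the expensive simulation offline; a practical version would likely interpolate between grid nodes for sub-grid accuracy.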

July 2022 • Eurographics Symposium on Rendering (EGSR)

Conference Paper

EGSR 2022

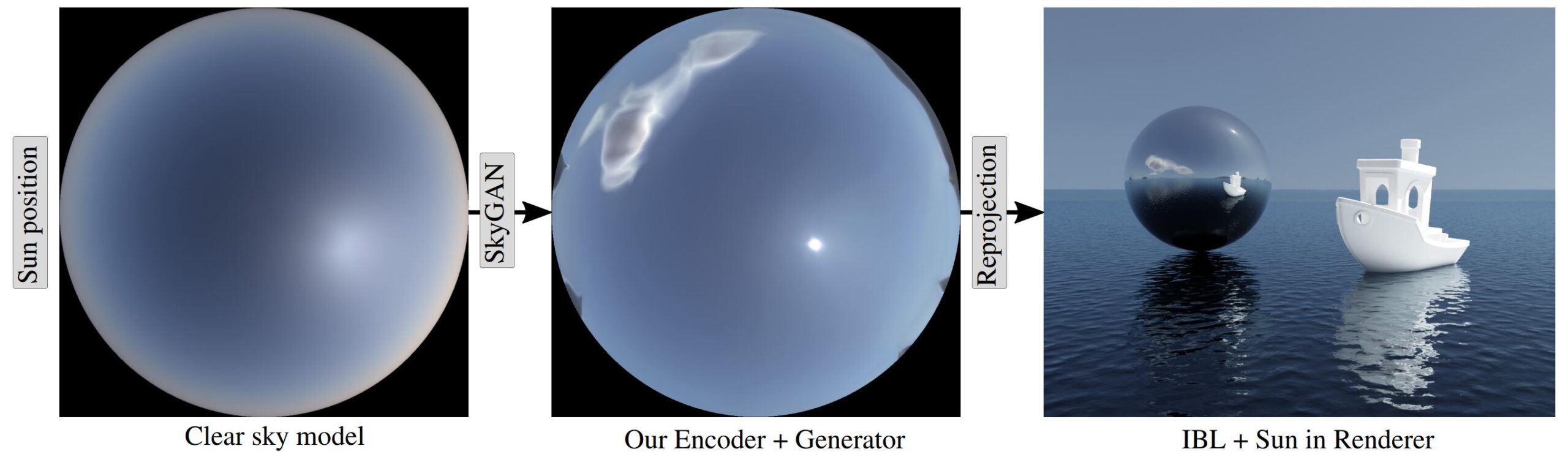

Achieving photorealism when rendering virtual scenes in movies or architectural visualizations often depends on providing realistic illumination and background. Typically, spherical environment maps serve both as a natural light source from the Sun and the sky and as a background with clouds and a horizon. In practice, the input is either a static high-resolution HDR photograph manually captured on location in real conditions, or an analytical clear-sky model that is dynamic but cannot model clouds. Our approach bridges these two limited paradigms: a user can control the sun position and cloud coverage ratio and generate a realistic-looking environment map for these conditions. It is a hybrid data-driven analytical model based on a modified state-of-the-art GAN architecture, trained on matching pairs of physically accurate clear-sky radiance and HDR fisheye photographs of clouds. We demonstrate our results on renders of outdoor scenes under varying times, dates, and cloud cover.

August 2021 • ACM Transactions on Graphics (TOG)

Journal Publication

ACM SIGGRAPH 2021

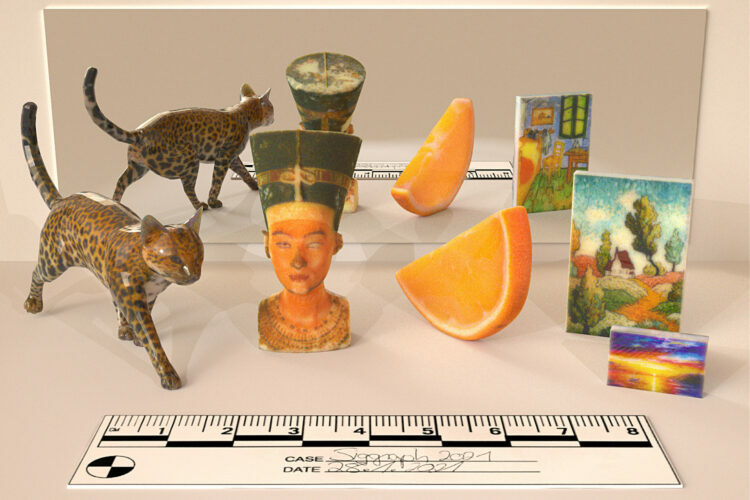

In full-color inkjet 3D printing, a key problem is determining the material configuration for the millions of voxels that a printed object is made of. The goal is a configuration that minimises the difference between the desired target appearance and the result of the printing process. So far, the techniques used to find such a configuration have relied on domain-specific methods or heuristic optimization, which allow only limited control over the resulting appearance.

We propose using differentiable volume rendering in a continuous material-mixture space, which yields a framework that can serve as a general tool for optimising inkjet 3D printouts. We demonstrate the technical feasibility of this approach and use it to attain fine control over the fabricated appearance and a high level of faithfulness to the specified target.
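Optimizing in a continuous mixture space can be sketched for a single voxel. This is a simplified illustration, not the published framework: the differentiable volume renderer is replaced by a hypothetical linear mix of ink colors (`INKS` and `render` are assumptions), and the mixture weights are parameterized with a softmax so they stay non-negative and sum to one while plain gradient descent runs on unconstrained logits.

```python
import math

# HYPOTHETICAL ink palette: RGB reflectance of each pure ink.
INKS = [
    (0.9, 0.9, 0.9),  # white
    (0.1, 0.8, 0.9),  # cyan
    (0.9, 0.1, 0.8),  # magenta
    (0.9, 0.9, 0.1),  # yellow
]

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def render(weights):
    """Toy differentiable 'renderer': linear mix of ink colors.
    The real framework uses differentiable volume rendering instead."""
    return [sum(w * ink[c] for w, ink in zip(weights, INKS)) for c in range(3)]

def loss_and_grad(logits, target):
    w = softmax(logits)
    color = render(w)
    residual = [color[c] - target[c] for c in range(3)]
    loss = sum(r * r for r in residual)
    # dL/dw_k = sum_c 2 * residual_c * INKS[k][c]
    dl_dw = [sum(2.0 * residual[c] * ink[c] for c in range(3)) for ink in INKS]
    # Chain rule through the softmax: dL/dz_j = w_j * (dL/dw_j - sum_k w_k dL/dw_k)
    dot = sum(wk * gk for wk, gk in zip(w, dl_dw))
    grad = [wj * (gj - dot) for wj, gj in zip(w, dl_dw)]
    return loss, grad

def optimize(target, steps=5000, lr=0.5):
    """Plain gradient descent on the logits, starting from a uniform mixture."""
    logits = [0.0] * len(INKS)
    loss = float("inf")
    for _ in range(steps):
        loss, grad = loss_and_grad(logits, target)
        logits = [z - lr * g for z, g in zip(logits, grad)]
    return softmax(logits), loss

target = render([0.4, 0.3, 0.2, 0.1])  # a color the palette can reach
weights, final_loss = optimize(target)
print([round(w, 3) for w in weights], final_loss)
```

Because the four hypothetical inks are affinely independent, a reachable target corresponds to exactly one mixture, so the recovered weights approach the ones that produced it. In a real printout the same loop would run over millions of voxels with gradients supplied by the renderer.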

August 2021 • ACM Transactions on Graphics (TOG)

Journal Publication

ACM SIGGRAPH 2021

We present a fitted model of sky dome radiance and attenuation for realistic terrestrial atmospheres. Using scatterer distributions derived from atmospheric measurement data, our model considerably improves the visual realism of existing analytical clear-sky models, as well as of interactive methods based on approximating atmospheric light transport. We also provide features not found in fitted models so far: radiance patterns for post-sunset conditions, in-scattered radiance and attenuation values for finite viewing distances, an observer-altitude-resolved model that includes downward-looking viewing directions, and polarisation information. We introduce a fully spherical model for in-scattered radiance that replaces the family of hemispherical functions originally introduced by Perez et al. and extended in several subsequent analytical models; our model instead relies on compressing reference images via tensor decomposition.
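Compressing reference images with a low-rank decomposition can be illustrated in its simplest, two-dimensional form: a truncated SVD computed by power iteration and deflation. This only demonstrates the principle of low-rank compression; the model's tensor decomposition operates on higher-order data, and the synthetic `sky` image below is a hypothetical stand-in for rendered reference radiance.

```python
import math
import random

def matvec(A, x):
    return [sum(a * b for a, b in zip(row, x)) for row in A]

def rmatvec(A, y):
    """Computes A^T y."""
    return [sum(A[i][j] * y[i] for i in range(len(A))) for j in range(len(A[0]))]

def rank1_power(A, iters=200, seed=0):
    """Leading singular triple (sigma, u, v) of A via power iteration on A^T A."""
    rng = random.Random(seed)
    v = [rng.random() + 0.1 for _ in range(len(A[0]))]
    for _ in range(iters):
        w = rmatvec(A, matvec(A, v))
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:  # residual already zero: nothing left to extract
            return 0.0, [0.0] * len(A), v
        v = [x / norm for x in w]
    Av = matvec(A, v)
    sigma = math.sqrt(sum(x * x for x in Av))
    u = [x / sigma for x in Av] if sigma > 0.0 else [0.0] * len(A)
    return sigma, u, v

def truncated_svd(A, rank):
    """Greedy rank-`rank` approximation by repeated rank-1 deflation."""
    residual = [row[:] for row in A]
    factors = []
    for _ in range(rank):
        sigma, u, v = rank1_power(residual)
        factors.append((sigma, u, v))
        residual = [[residual[i][j] - sigma * u[i] * v[j] for j in range(len(v))]
                    for i in range(len(u))]
    return factors

def reconstruct(factors, rows, cols):
    return [[sum(s * u[i] * v[j] for s, u, v in factors) for j in range(cols)]
            for i in range(rows)]

def max_abs_error(A, B):
    return max(abs(a - b) for ra, rb in zip(A, B) for a, b in zip(ra, rb))

# HYPOTHETICAL smooth 'radiance image' built from two separable terms (rank 2).
ROWS, COLS = 16, 24
sky = [[math.exp(-0.1 * i) * (1.0 + 0.5 * math.cos(0.3 * j))
        + 0.05 * math.sin(0.4 * i) * math.cos(0.2 * j)
        for j in range(COLS)] for i in range(ROWS)]

approx1 = reconstruct(truncated_svd(sky, 1), ROWS, COLS)
approx2 = reconstruct(truncated_svd(sky, 2), ROWS, COLS)
print(max_abs_error(sky, approx1), max_abs_error(sky, approx2))
```

Storing `rank` triples instead of the full image is what makes such representations compact; smooth radiance distributions tend to need only a few components.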

September 2019 • Charles University

Tomáš Iser, Jan Horáček (supervisor)

Publication

Master Thesis

Best Software Master Thesis 2019

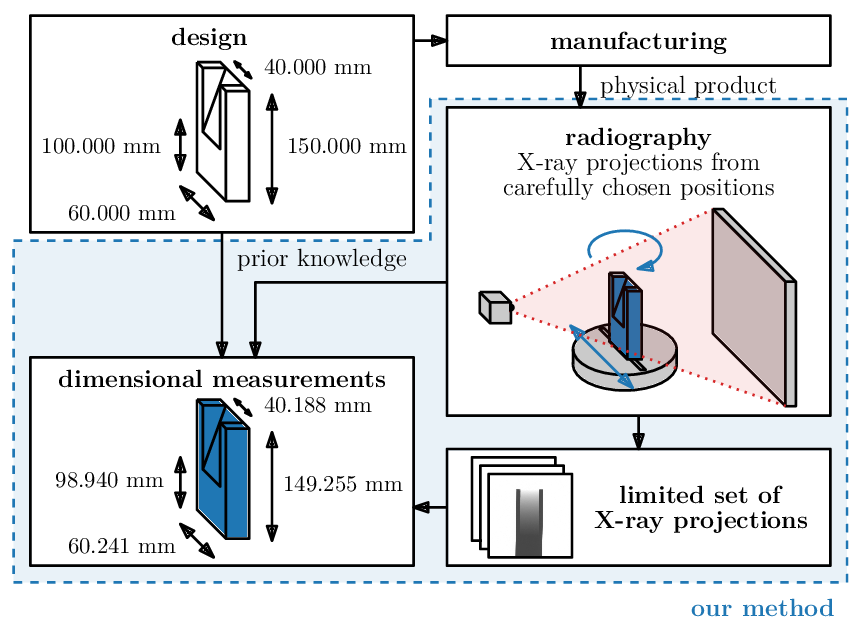

Modern non-destructive approaches to quality control in manufacturing often rely on X-ray computed tomography to measure even hard-to-reach features. Unfortunately, such measurements require hundreds or thousands of calibrated X-ray projections, which is time-consuming and may cause bottlenecks. Even recent state-of-the-art research still requires tens to hundreds of projections.

In this thesis, we examine the physics of radiography, the relevant technologies, and existing solutions, and we propose a novel approach for non-destructive dimensional measurements from a limited number of projections. Instead of relying on computed tomography, we formulate the measurements as a minimization problem in which we compare our parametric model to reference radiographs. We propose a complete dimensional measurement pipeline, including object parametrizations, material calibrations, simulations, and hierarchical optimizations. We fully implemented the method and evaluated its accuracy and repeatability using real radiographs of real physical objects. We achieved an accuracy in the range of tens to hundreds of micrometers, which is almost comparable to industrial computed tomography, while using only two or three reference radiographs. These results are significant for industrial quality control: acquiring two or three radiographs takes only a couple of seconds, so we significantly reduce both the X-ray machine time and the time required to detect manufacturing errors.
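The core of this formulation, simulating a radiograph of a parametric model and minimizing its difference from a reference, can be sketched with a single unknown. Everything here is an assumption for illustration: a monochromatic parallel beam, a homogeneous object with a disc-shaped cross-section whose radius is the only parameter, a hypothetical calibrated attenuation coefficient `MU`, and golden-section search in place of the thesis' hierarchical optimization.

```python
import math

MU = 0.05    # HYPOTHETICAL linear attenuation coefficient (1/mm), from calibration
I0 = 1000.0  # HYPOTHETICAL unattenuated detector intensity

def chord_length(radius, x):
    """Path length of a parallel ray at detector offset x through a disc."""
    return 2.0 * math.sqrt(radius * radius - x * x) if abs(x) < radius else 0.0

def simulate_profile(radius, offsets):
    """Beer-Lambert law per detector pixel: I = I0 * exp(-MU * path length)."""
    return [I0 * math.exp(-MU * chord_length(radius, x)) for x in offsets]

def objective(radius, offsets, reference):
    """Sum of squared differences between simulated and reference profiles."""
    simulated = simulate_profile(radius, offsets)
    return sum((s - r) ** 2 for s, r in zip(simulated, reference))

def golden_section(f, lo, hi, tol=1e-6):
    """Minimize a unimodal 1D function on [lo, hi]."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    while b - a > tol:
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
        if f(c) < f(d):
            b = d
        else:
            a = c
    return 0.5 * (a + b)

offsets = [0.1 * k for k in range(-150, 151)]  # detector pixel positions (mm)
reference = simulate_profile(10.0, offsets)    # stands in for a measured radiograph
estimate = golden_section(lambda r: objective(r, offsets, reference), 1.0, 14.0)
print(f"estimated radius: {estimate:.4f} mm")
```

Each pixel's error shrinks monotonically as the radius approaches the true value, so the objective is unimodal and a bracketing search suffices here; real objects with many parameters, noise, and polychromatic beams need the richer parametrizations and calibrations described above.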

April 2019 • Charles University

Software Project

Publication

Student Conference

1st Best Paper

2nd Best Presentation

Best Video

Our focus is on the real-time rendering of large-scale volumetric participating media, such as fog. Since a physically correct simulation of light transport in such media is inherently difficult, existing real-time approaches are typically based on low-order scattering approximations or consider only homogeneous media.

We present an improved image-space method for computing light transport within quasi-heterogeneous, optically thin media. Our approach is based on a physically plausible formulation of the image-space scattering kernel and on analytically integrable medium density functions. In particular, we propose a novel hierarchical anisotropic filtering technique tailored to the target environments within homogeneous media. Our parallelizable solution renders visually convincing, temporally coherent animations with fog-like media in real time, within a bounded budget of only milliseconds per frame.
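As an example of an analytically integrable density, consider exponential height fog, `rho(h) = a * exp(-b * h)`. This is an assumed, commonly used choice, not necessarily the density family used in the paper. Along a ray with height `h(t) = h0 + t * dy` for `t` in `[0, L]`, the optical depth has the closed form `tau = a * exp(-b * h0) * (1 - exp(-b * dy * L)) / (b * dy)`, so the transmittance `exp(-tau)` needs no ray marching. The sketch checks the closed form against a numerical reference.

```python
import math

def optical_depth_exp_fog(a, b, origin_y, dir_y, length):
    """Closed-form integral of rho(h) = a * exp(-b * h) along a ray segment.

    Ray height is h(t) = origin_y + t * dir_y for t in [0, length], where
    dir_y is the vertical component of a unit direction vector.
    """
    base = a * math.exp(-b * origin_y)
    k = b * dir_y
    if abs(k) < 1e-8:
        # (Nearly) horizontal ray: density is constant along the segment.
        return base * length
    return base * (1.0 - math.exp(-k * length)) / k

def transmittance(a, b, origin_y, dir_y, length):
    """Beer-Lambert transmittance through the fog segment."""
    return math.exp(-optical_depth_exp_fog(a, b, origin_y, dir_y, length))

def optical_depth_numeric(a, b, origin_y, dir_y, length, steps=10000):
    """Midpoint-rule reference, used only to check the closed form."""
    dt = length / steps
    return sum(a * math.exp(-b * (origin_y + (i + 0.5) * dt * dir_y)) * dt
               for i in range(steps))

# HYPOTHETICAL fog parameters and ray.
closed = optical_depth_exp_fog(0.02, 0.1, 1.0, 0.3, 50.0)
numeric = optical_depth_numeric(0.02, 0.1, 1.0, 0.3, 50.0)
print(closed, numeric, transmittance(0.02, 0.1, 1.0, 0.3, 50.0))
```

The closed form costs a couple of exponentials per ray regardless of ray length, which is what makes such densities attractive under a fixed per-frame budget.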

June 2017 • Charles University

Publication

Bachelor Thesis

Dean’s Prize

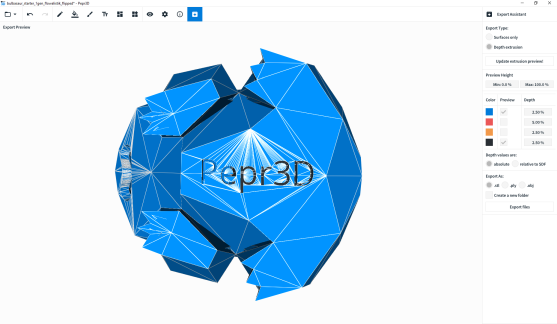

The focus of this thesis is the real-time rendering of participating media, such as fog. This is an important problem because such media significantly influence the appearance of the rendered scene. It is also a challenging one, because a physically correct solution involves a costly simulation of a very large number of light-particle interactions, especially when considering multiple scattering. Existing real-time approaches are mostly based on empirical or single-scattering approximations, or consider only homogeneous media.

This work briefly examines existing solutions and then presents an improved method for real-time multiple scattering in quasi-heterogeneous media. We use analytically integrable density functions and efficient MIP map filtering, together with several techniques to minimize the inherent visual artifacts. The solution has been implemented and evaluated in a combined CPU/GPU prototype application. The resulting highly parallel method achieves good visual fidelity with a stable computation time of only a few milliseconds per frame.
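The MIP map part can be illustrated with a basic power-of-two pyramid built by 2x2 box averaging, from which a wide filter footprint is approximated by reading a single texel of a coarser level. This sketch assumes square power-of-two images and nearest-texel lookup; `filtered_lookup` is a hypothetical helper, and the thesis' artifact-reduction techniques are not reproduced.

```python
import math

def downsample(img):
    """Halve a power-of-two image by averaging 2x2 blocks (box filter)."""
    h, w = len(img) // 2, len(img[0]) // 2
    return [[(img[2 * i][2 * j] + img[2 * i][2 * j + 1]
              + img[2 * i + 1][2 * j] + img[2 * i + 1][2 * j + 1]) / 4.0
             for j in range(w)] for i in range(h)]

def build_mip_chain(img):
    """Return [level0, level1, ...] down to a 1x1 image."""
    chain = [img]
    while len(chain[-1]) > 1 and len(chain[-1][0]) > 1:
        chain.append(downsample(chain[-1]))
    return chain

def filtered_lookup(chain, i, j, footprint):
    """HYPOTHETICAL helper: approximate a box filter about `footprint` texels
    wide around (i, j) by reading the level whose texels have that size."""
    level = min(len(chain) - 1, max(0, int(round(math.log2(max(footprint, 1.0))))))
    scale = 2 ** level
    return chain[level][i // scale][j // scale]

image = [[i * 8 + j for j in range(8)] for i in range(8)]
chain = build_mip_chain(image)
print(len(chain), chain[-1][0][0])  # prints: 4 31.5
```

Building the pyramid once per frame makes every later lookup constant-time regardless of the filter width, at the cost of blocky, axis-aligned filtering that practical implementations must compensate for.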